Dec 15th 2020

Webinar Recording and Transcript: Maximize ROAS Through LTV Powered UA Automation

Advertisers today measure the ongoing performance of their marketing efforts using D7 ROAS targets. AlgoLift by Vungle General Manager Paul Bowen gets more granular on how advertisers can generate outsized returns by measuring their ad strategy via accurately-predicted LTV.

In this brand new Vungle webinar, AlgoLift by Vungle answered four burning questions on how AlgoLift Premium helps advertisers hit their long-term goals, including “What’s the lifetime value of my users?”, “What’s the impact of my paid UA on my organic revenue?”, “How do I optimally allocate my bids and budgets from my marketing spend on a daily basis?”, and “How do I measure the performance of my campaigns and channels on iOS 14?”.

Vunglers in This Webinar

Paul Bowen

Paul has 20 years of experience in digital advertising. He is the General Manager of AlgoLift by Vungle. AlgoLift, recently acquired by Vungle, is the market leader in LTV prediction and user acquisition automation for mobile apps. Paul has also held VP roles at Unity and Tapjoy.

Matt Ellinwood

Matt Ellinwood is a Director of Product Management at Vungle where he leads product for the Demand Side Platform (DSP), Data Product, and Privacy initiatives. His ad tech experience has focused on data and performance products, including audience targeting, experimentation, and performance analytics. Prior to Vungle, he led Data Products at TubeMogul (now Adobe Ad Cloud).

Watch This Webinar Now

Webinar Transcript

A lightly edited transcript of our webinar follows.

Matt:

Hello everyone, and thank you for joining us today. My name is Matt Ellinwood. I am a Director of Product Management here at Vungle, and I’m happy to welcome you to our webinar, “Maximize ROAS Through LTV Powered UA Automation”. Before we get started, I’ve got a few housekeeping items. We are recording this webinar and will email you a link to the recording once we’re done, so keep an eye out for that. We will also have a Q&A session at the end of the webinar. Please submit your questions at any time through Zoom using the Q&A button at the bottom of the toolbar, and we will answer them at the very end of the presentation. We’re also going to ask you to participate in three short polls during the webinar, and we’ll share those results as we go.

So to begin, Vungle acquired AlgoLift in October, and we’re extremely excited to add the AlgoLift platform to our offerings and to bring the fabulous AlgoLift team into the Vungle family. There are four themes of overlap between Vungle and AlgoLift that really highlight how aligned the companies are and why this is such a great match: data, insights, execution, and privacy. On data, both of our companies take an extremely data-focused approach, with robust data science and ML (machine learning) solutions that use data at a very granular level to bring specific value to our clients. On insights, we are both focused on using that data to bring our clients insights that are unique and, importantly, actionable: intelligence our clients can actually use to drive the growth of their business.

We bring up execution because at Vungle we’ve long been focused on advertiser efficiency, building tools that help teams launch and manage their campaigns. AlgoLift’s focus on execution and automation, which we will look at today, brings that advertiser efficiency promise into even greater relief. And then finally, privacy, which is becoming even more important as we move from 2020 into 2021 and start to see and understand the full impact of the changes in iOS 14. Privacy is built into the absolute core of the AlgoLift product. The platform derives its insights without ever touching PII (personally identifiable information), which means it’s built from the ground up for iOS 14 and, really, for any privacy-centered regulatory scheme.

So when we look across these themes, the alignment between our two companies is incredibly strong, and we’re extremely excited to bolster what we do for our clients with this amazing range of additional capabilities, which we’ll take a much more detailed look at today. So let’s look at the agenda. We’re going to start by learning a little more about AlgoLift’s background and where the product and team come from. Then we’ll spend the majority of our time on the problems that AlgoLift solves, supported by case studies that illustrate how the platform works and what benefits it delivers. We’ll finish by looking at the onboarding process, to understand what it takes to get started and how you can begin working with AlgoLift. So I’m extremely happy to introduce Paul Bowen, the GM of AlgoLift by Vungle, who will take us through this agenda. Thanks, Paul.

Paul:

Thanks, Matt. Really appreciate the kind introduction. So before we get started on the content, I’m curious to hear how familiar you are with AlgoLift. So let’s start with a poll question. “What’s your familiarity level with AlgoLift today?” Are you not at all familiar? A little familiar? Very familiar? Or you know everything. And if you know everything, why are you here? But yeah, just curious to hear the level of understanding within the audience. So it looks like around 79% of people are “A little familiar” and we have 11% who are “Not familiar at all”. So definitely some work to do during this webinar. I’m looking forward to sharing more about AlgoLift.

So to share more about AlgoLift and who we are, I’d like to introduce myself. My name is Paul Bowen, and I am GM of AlgoLift by Vungle. Feel free to contact me at any time at paul@algolift.com; I’m very open to connecting about anything in this webinar or any questions about the mobile app marketing space. I’ve been with AlgoLift for 18 months now. Prior to joining AlgoLift, I was VP of account management at Unity for three years, and before that, I spent almost six years at Tapjoy in various VP roles. So I’ve been in the mobile app marketing and monetization space for 10 years and the digital advertising space for almost 20 years.

To introduce the company a bit more: AlgoLift was formed in 2016. We’re based in [Los Angeles], and we’re a 20-person company. As Matt said, we were fortunate enough to be acquired by Vungle in October 2020, and we’re super excited to see what the future looks like and to bring our solutions to a larger audience of advertisers. We’re the market leader in predicting LTV and user acquisition automation. We really focus on mobile apps, but we do have clients with web and console products. If I were to highlight our USP (unique selling proposition), I’d first say that you can’t do good user acquisition without good LTV predictions and, even more importantly, you can’t do good user acquisition automation without good LTV predictions. So AlgoLift focuses on maintaining accurate LTV models for both gaming and subscription apps, to help ensure our clients get the optimal returns on their UA spend and can plan their businesses for the long term.

So I’ve highlighted a few examples of clients that we work with. You’ll see that there are both gaming and non-gaming companies there. Before we move on, we have another poll question: I’m curious what problems you need solving. Do you have challenges predicting the future LTV of your users? Are you currently able to measure organic uplift, and do you see that as a big challenge right now? Are you able to optimize your cross-channel and campaign bid and budget allocations in the most effective way? And how are you thinking about iOS 14 and the problems there? Curious to hear your answers.

So it looks like predicting LTV is the winner with 71%, but we also have 57% of people thinking about iOS 14. I’m definitely not surprised about those two topics coming out at the top; they’re questions we get asked about a lot by prospective clients. So let’s talk about the problems that we solve for advertisers. I’ve highlighted four that our clients ask about often.

What problems does AlgoLift solve?

Predict Lifetime Value (pLTV)

The first is, “What’s the lifetime value of my users?” So you’ve acquired a bunch of installs. You want to know the future revenue that those installs will generate. And so AlgoLift accurately predicts the one-year LTV every day for every user within a mobile application. We help advertisers understand which campaign is performing. We help advertisers understand which channel is performing. We help them plan their businesses for the long term and operate them profitably.

Organic uplift measurement

The second question we get asked is, “What’s the impact of my paid UA on my organic revenue?” AlgoLift measures the additional organic revenue driven by paid UA at the ad network and platform level. This helps advertisers determine where best to allocate marketing spend to maximize ROAS. It also gives them the potential to increase their payback window, now that they can finally measure organic uplift.

Optimal cross-channel and campaign bid/budget allocation

The third question we get asked is, “How do I optimally allocate my bids and budgets from my marketing spend on a daily basis?” Our platform connects to the management APIs of leading ad networks and leverages our LTV models to programmatically distribute the optimal bid and budget allocation across both channels and campaigns to hit the advertiser’s long-term goal. So our platform really solves the hard math problems that algorithms are better at tackling than humans.

iOS 14 measurement

And then lastly, and more recently, the question we’re asked is, “How do I measure the performance of my campaigns and channels on iOS 14?” Leveraging our data science capabilities and user-level models, we’re able to provide measurement services for user acquisition campaigns on iOS 14. In a world where IDFA is effectively deprecated, our platform helps advertisers measure the predicted ROAS of their campaigns so they can continue UA as before.

Predicted LTV

So let’s talk a bit more about predicted LTV: what’s the predicted LTV of my users? Before we get into predicted LTV, let’s talk about the problem, which is D7 ROAS. I’m sure this is familiar to many people watching right now. D7 ROAS is the industry-standard KPI for measuring the performance of user acquisition campaigns, and advertisers have D7 ROAS targets that they use to measure the ongoing performance of their activities. That’s really how most UA is done today. So let’s take a moment to analyze how those D7 ROAS targets are created.

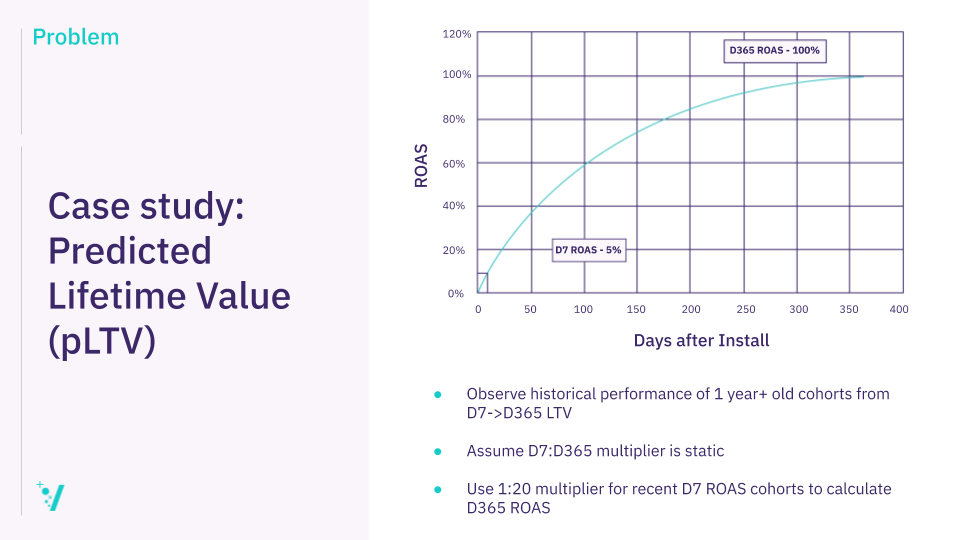

So I’m showing a graph (above) here with a ROAS curve for an install cohort from one year ago. So these installs have just had their first birthday—happy birthday, Installs. And now they’re fully mature based on a one-year, 100% ROAS target.

So by observing the revenue generated from this cohort over the last year, we can see that the D7 ROAS was 5% and the D365 ROAS was 100%. So the ratio between the D7 and the D365 ROAS is 1-to-20: if we multiply the D7 ROAS by 20, we get the D365 ROAS. We call this number, 20, our multiplier. If we’re using the multiplier method for our LTV model, we apply this multiplier to the D7 ROAS of recently acquired cohorts of installs to predict their D365 ROAS.

So let’s take another example. We acquired a cohort of installs a week ago, and today we observe that their D7 ROAS is 3%. We multiply this 3% by our multiplier of 20 to get an expected D365 ROAS of 60% (20 times 3 is 60). So let’s recap how we got there: we observed the historical performance of a one-plus-year-old cohort from D7 to D365, and then we applied that ratio to recently acquired cohorts to calculate our future returns from those installs.
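To make the multiplier method concrete, here is a minimal Python sketch of the naive approach described above, using the walkthrough’s figures. It illustrates the calculation the talk critiques, not AlgoLift’s model; the function names are hypothetical.

```python
"""Minimal sketch of the naive D7-to-D365 "multiplier method" described above."""


def multiplier_from_mature_cohort(d7_roas: float, d365_roas: float) -> float:
    """Ratio of D365 ROAS to D7 ROAS, observed on a cohort at least a year old."""
    if d7_roas <= 0:
        raise ValueError("D7 ROAS must be positive to derive a multiplier")
    return d365_roas / d7_roas


def predict_d365_roas(recent_d7_roas: float, multiplier: float) -> float:
    """Apply the historical multiplier to a recent cohort's observed D7 ROAS."""
    return recent_d7_roas * multiplier


if __name__ == "__main__":
    # Figures from the walkthrough: the mature cohort had 5% D7 ROAS and 100% D365 ROAS.
    multiplier = multiplier_from_mature_cohort(d7_roas=0.05, d365_roas=1.00)
    # A cohort acquired a week ago shows 3% D7 ROAS.
    expected = predict_d365_roas(recent_d7_roas=0.03, multiplier=multiplier)
    print(f"multiplier = {multiplier:.0f}, predicted D365 ROAS = {expected:.0%}")
```

Running it prints a multiplier of 20 and a predicted D365 ROAS of 60%, matching the arithmetic above. Note that a single ratio is reused for every channel, geo, and campaign, which is exactly the weakness discussed next.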

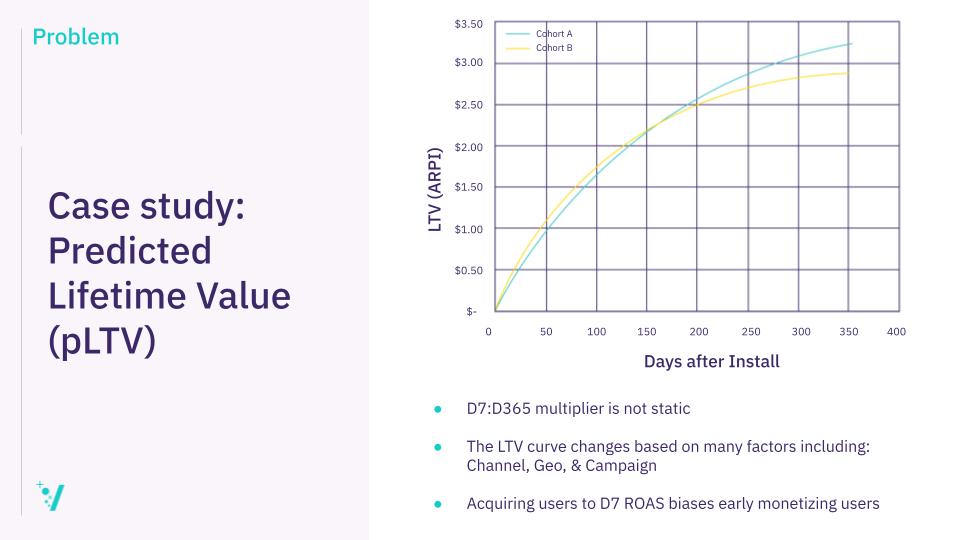

So what’s the problem there? The ratio between D7 and D365 is not static. LTV curves change with many factors, including channel, geo, campaign, etcetera. And acquiring users against D7 ROAS targets biases spend toward early-monetizing installs.

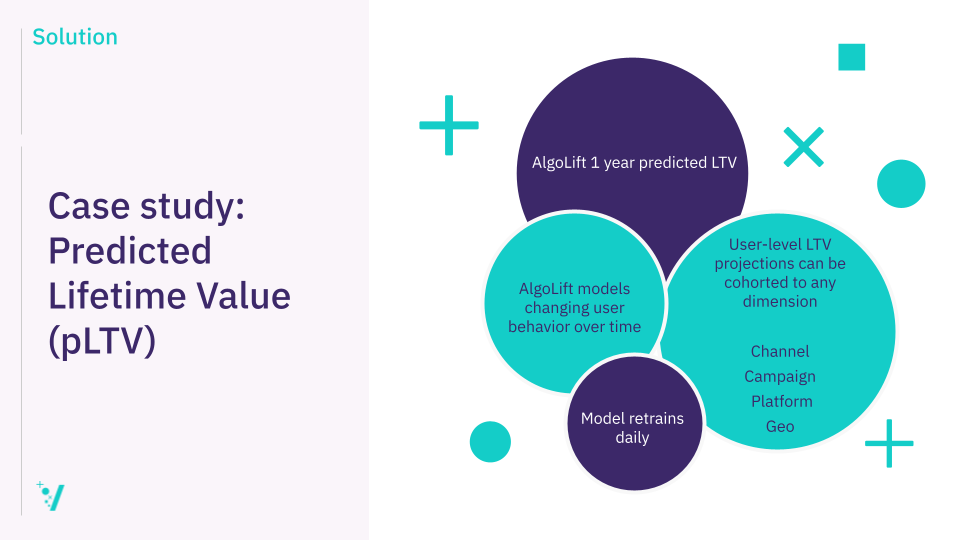

So let’s talk about the solution. AlgoLift’s one-year predicted LTV is updated every day for every user. The data sets we use to predict this LTV are attribution data, revenue data, and in-app engagement data. The attribution data is the data generated by an MMP (mobile measurement partner) on the install: channel, campaign, geo, and platform, all reported against a non-PII user ID. So that’s not IDFA, and it’s not the Google Advertising ID; it’s an advertiser-generated ID created when a user installs the application. Revenue data is in-app purchase, ad revenue, and subscription data, all at the user level. And then there’s in-app engagement data.

For example, with a game, engagement data may be something like level complete, Facebook connect, or guild membership in a strategy game. In a meditation app, it might be something like the number of meditations every day. We make a user-level LTV prediction every day for every user, and we share these predictions back with our clients. The benefit of user-level LTV predictions is that they can be cohorted by any dimension. If you want to compare Facebook vs. Google vs. Vungle vs. Unity, you can aggregate user-level predictions up to the channel level. You can see how one campaign compares to another campaign. You can look at the geo level as well: “How does my U.S. cohort of installs compare to my China installs?” And platform as well. So you can cohort the data by any dimension on which you’re reporting users.
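The cohorting idea can be sketched as a simple group-by over user-level predictions. This is an illustrative Python sketch, not AlgoLift’s data model: the `UserPrediction` fields and the `cohort_pltv` helper are hypothetical, and the sample records are made up.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UserPrediction:
    user_id: str      # advertiser-generated, non-PII ID (not IDFA or GAID)
    channel: str
    campaign: str
    geo: str
    platform: str
    pltv_d365: float  # predicted one-year LTV in dollars


def cohort_pltv(predictions, dimension):
    """Group user-level pLTV by any reported attribute and summarize each group."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for p in predictions:
        key = getattr(p, dimension)  # e.g. "channel", "campaign", "geo", "platform"
        totals[key] += p.pltv_d365
        counts[key] += 1
    return {
        key: {
            "users": counts[key],
            "total_pltv": totals[key],
            "avg_pltv": totals[key] / counts[key],
        }
        for key in totals
    }


if __name__ == "__main__":
    # Hypothetical records for illustration only.
    users = [
        UserPrediction("u1", "Facebook", "fb_campaign_a", "US", "iOS", 2.50),
        UserPrediction("u2", "Vungle", "vungle_campaign_b", "US", "Android", 4.00),
        UserPrediction("u3", "Facebook", "fb_campaign_a", "CN", "iOS", 1.50),
    ]
    print(cohort_pltv(users, "channel"))
    print(cohort_pltv(users, "geo"))
```

Because the predictions are stored per user, the same function answers the channel, campaign, geo, or platform question by changing only the `dimension` argument.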

So unlike the static D7 ROAS model, we’re not assuming static user behavior over time. Our models account for changing user behavior, and they’re retrained automatically every day, so there’s no need to manually recalculate anything, such as the multiplier. That also means we pick up product changes. Say you’re a game developer and you ship a game update: our models will automatically pick up changes within the game based on users engaging with the new content. We also pick up changes in the market and seasonality. So if there’s a competitor launch, that may be reflected in the pLTV as well, because we’re retraining these models every day.

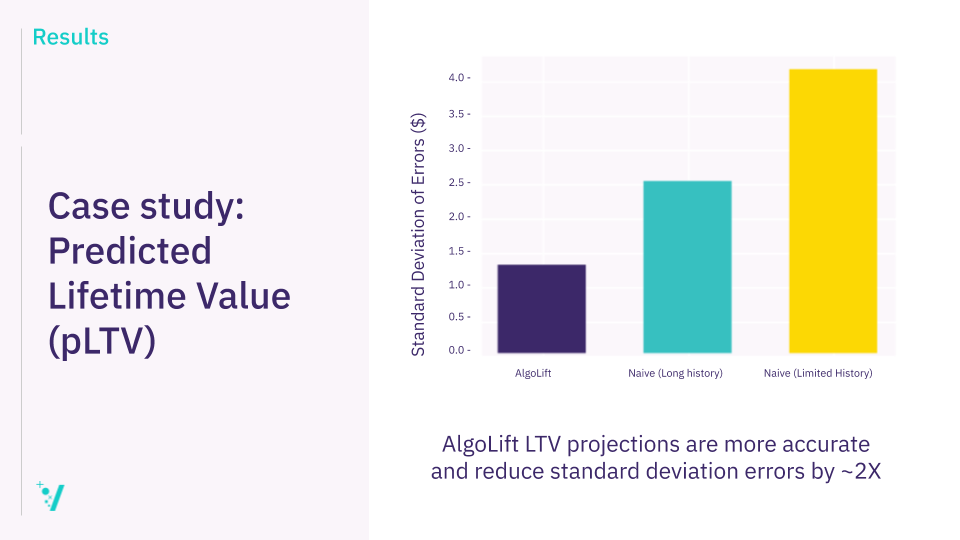

So the results. The question is, “How much better are LTV projections than D7 ROAS?” To answer this, we can compare LTV predictions made at D7 in the user’s lifetime to the multiplier approach. The error metric we chose to measure the results is absolute error: the difference between the predicted LTV and the actual LTV in dollars. If we take a random sample of 1,000 users for each comparison, drawn from a larger sample of users, we find that the naive multiplier model (the D7 ROAS model) has a much larger standard deviation of errors than the AlgoLift LTV model: up to 2X larger when we have a long history of installs to train the multiplier model on. When there is only limited historical data to train the D7 ROAS model on, the standard deviation of errors is much higher.

As you can see on the right-hand side of the chart (above), in yellow, that gap grows to up to 4X. So the AlgoLift model is far more accurate than a D7 model at predicting LTV.
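The error comparison can be sketched as follows: compute each user’s absolute error in dollars, then compare the standard deviation of those errors between the multiplier baseline and a model. This is a hypothetical Python sketch that assumes “standard deviation of errors” means the population standard deviation of per-user absolute errors; the helper names and toy inputs are not from the webinar.

```python
import random
from statistics import pstdev


def absolute_errors(predicted, actual):
    """Per-user absolute error |predicted LTV - actual LTV|, in dollars."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    return [abs(p - a) for p, a in zip(predicted, actual)]


def error_spread_ratio(baseline_pred, model_pred, actual):
    """How many times larger the baseline's error standard deviation is than the model's."""
    baseline_sd = pstdev(absolute_errors(baseline_pred, actual))
    model_sd = pstdev(absolute_errors(model_pred, actual))
    if model_sd == 0:
        raise ValueError("model errors have zero spread; the ratio is undefined")
    return baseline_sd / model_sd


def sampled_spread_ratio(baseline_pred, model_pred, actual, k=1000, seed=0):
    """Same comparison on a random sample of up to k users from the full population."""
    rng = random.Random(seed)
    idx = rng.sample(range(len(actual)), min(k, len(actual)))

    def pick(values):
        return [values[i] for i in idx]

    return error_spread_ratio(pick(baseline_pred), pick(model_pred), pick(actual))
```

A ratio of 2 from `sampled_spread_ratio` would correspond to the “up to 2X” figure above; the function only reports the ratio and makes no claim about which model produced which predictions.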

Organic Lift

Organic uplift: advertisers ask us, “How do I quantify the impact of my paid UA on my organic revenue?” It’s a common question, and most app developers who try to quantify this take a very simplistic approach. It’s a really hard problem to solve, for sure. The problem is that user acquisition teams spend millions of dollars on paid UA, and they’re not directly credited for incrementality. There’s hidden virality or word of mouth, position in the app store charts, or incomplete measurement. All these factors contribute to organic uplift.

Organic uplift is really hard to measure. Differentiating the signal from the noise is challenging and it requires significant data science capabilities to be able to do that. Furthermore, gaining access to enough data points to build a reliable model is out of reach for most developers. You really do need billions of installs and impression events to be able to understand signal vs. noise effectively and build a robust model here.

So the solution: AlgoLift’s organic lift model quantifies the additional organic revenue generated by paid UA at the ad network and platform level. We sampled 25 billion paid impressions and 5 billion records of installs and revenue data to generate this model.

So you can see on this chart (above) that we’re looking at a D30 ROAS projection and adding an organic lift multiplier to it. The model adds a 13-point increase in predicted ROAS at the app level. We can then use the filtering capability to drill down to see what that looks like at the ad network or platform level. That’s the output of the model: a multiplier on the predicted ROAS for whichever window you’re buying across.

So the results. AlgoLift outputs a multiplier to the ROAS to quantify the uplift at the ad network and platform level. Several of our clients factor this multiplier into their automated channel budgets, which has increased their ROAS by up to 30%. In other words, when we can accurately account for organic uplift, we can improve ROAS by up to 30%.
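Because the organic lift output is described as a multiplier on predicted ROAS, applying it is one multiplication per network. The sketch below assumes a purely multiplicative adjustment, as described; the network names, base pROAS values, and multipliers are hypothetical, chosen only so that one network shows a 13-point lift like the app-level example.

```python
def apply_organic_lift(paid_proas: float, lift_multiplier: float) -> float:
    """Scale a paid-only predicted ROAS by an organic-lift multiplier."""
    if paid_proas < 0 or lift_multiplier <= 0:
        raise ValueError("pROAS must be non-negative and the multiplier positive")
    return paid_proas * lift_multiplier


def lift_in_points(paid_proas: float, lift_multiplier: float) -> float:
    """Increase in predicted ROAS, in percentage points, from the organic lift."""
    return (apply_organic_lift(paid_proas, lift_multiplier) - paid_proas) * 100


if __name__ == "__main__":
    # Hypothetical D30 pROAS and per-network multipliers, for illustration only.
    paid_d30_proas = {"network_a": 0.50, "network_b": 0.35}
    lift = {"network_a": 1.26, "network_b": 1.10}
    for network, proas in paid_d30_proas.items():
        adjusted = apply_organic_lift(proas, lift[network])
        print(f"{network}: {proas:.0%} -> {adjusted:.0%} "
              f"(+{lift_in_points(proas, lift[network]):.1f} pts)")
```

The same multiplier applies to whatever window you buy against (D30 here, D180 or D365 elsewhere), so the adjusted pROAS can feed directly into a budget decision.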

UA Automation

UA automation. The question we get asked is, “How do I optimally allocate my bids and budgets across channels and campaigns to ensure I get the best long-term returns?” So the problem: UA managers and analysts are trying to balance ROAS vs. spend using [Microsoft] Excel. You download your ROAS reports and then either sort the poorest-performing D7 campaigns and pause the worst ones, or try to give more budget to high-performing campaigns without knowing or predicting what the elasticity of those campaigns will be. How much more budget can I give to this campaign before I see diminishing returns? Both are challenges that UA managers try to solve manually.

The other challenge is that most UA teams are using D7 ROAS goals, not long-term goals such as D180 or D365. Few take long-term returns into account when making these manual decisions about how to optimize campaigns, and it’s really hard for UA teams to do that manually. They’re also manually inputting bids and budgets on these ad platforms. Once their naive LTV model has output some form of bid or budget recommendation, the UA manager needs to enter it into the ad network. This likely won’t adhere to ad network best practices, and the changes won’t be made at the best time based on the data accumulated and the predicted returns.

There’s also the problem of decision paralysis on small cohorts. Smaller cohorts or campaigns are really challenging to handle. Using a cohort model, it can be unclear what underlying behavior is driving returns from a small cohort or campaign. Is one user driving outsized returns for the cohort? How do you decide how much budget to give that campaign? How long do you wait to gather data on a sub-publisher before you lower the bid or pause spending on it? These questions can be paralyzing for a human. All of these add up to suboptimal cross-channel and campaign budget and bid allocation. That’s why relying on a platform that solves this is optimal: it lets humans focus on strategic and creative thinking, while the laborious, tedious tasks that require a ton of math are best solved by machines.

So the solution: AlgoLift’s fully AI-powered portfolio bid and budget UA management platform uses algorithms to predict future campaign performance. The AlgoLift platform makes a pROAS (predicted return on ad spend) calculation for every ad set, campaign, keyword, and sub-publisher, and then uses portfolio theory to determine the optimal spend allocation to hit the client’s long-term goal. Then, leveraging ad network management APIs, our platform programmatically adjusts bids and budgets at the lowest granularity possible to achieve the optimal spend distribution. Today we focus on Facebook, Google, Apple Search Ads, Vungle, Unity, AppLovin, and ironSource.
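AlgoLift’s portfolio optimizer isn’t described in enough detail to reproduce, but the core idea (spend where predicted long-term returns are highest, subject to a ROAS goal and diminishing returns) can be sketched. The Python below swaps in a simpler technique, greedy marginal-return allocation over concave response curves, not portfolio theory. The `Campaign` curve shape (`scale * log1p(spend / saturation)`), its parameters, and the step size are all hypothetical assumptions.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Campaign:
    name: str
    scale: float       # hypothetical: size of the campaign's predicted D365 revenue curve ($)
    saturation: float  # hypothetical: spend ($) around which returns start to flatten

    def predicted_revenue(self, spend: float) -> float:
        """Concave response curve: each extra dollar returns less than the one before."""
        return self.scale * math.log1p(spend / self.saturation)


def allocate(campaigns, max_budget, target_proas, step=100.0):
    """Greedily add budget where the next step earns the most predicted revenue,
    stopping before portfolio pROAS would fall below the long-term target."""
    spend = {c.name: 0.0 for c in campaigns}
    total_spend = total_revenue = 0.0
    while total_spend + step <= max_budget:
        best, best_gain = None, 0.0
        for c in campaigns:
            s = spend[c.name]
            gain = c.predicted_revenue(s + step) - c.predicted_revenue(s)
            if gain > best_gain:
                best, best_gain = c, gain
        if best is None:
            break
        if (total_revenue + best_gain) / (total_spend + step) < target_proas:
            break  # the next step would pull portfolio pROAS under the goal
        spend[best.name] += step
        total_spend += step
        total_revenue += best_gain
    proas = total_revenue / total_spend if total_spend else 0.0
    return spend, proas


if __name__ == "__main__":
    campaigns = [Campaign("campaign_a", 5000.0, 1000.0), Campaign("campaign_b", 2000.0, 500.0)]
    allocation, portfolio_proas = allocate(campaigns, max_budget=10000.0, target_proas=1.0)
    print(allocation, f"portfolio D365 pROAS {portfolio_proas:.0%}")
```

Because each curve is concave, the marginal return of every extra step only falls, so once the best available step would pull portfolio pROAS below the target, no later step could restore it; that is why the loop stops there.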

Our clients target their portfolio of campaigns across ad networks toward a long-term ROAS goal such as D180 or D365 payback. We’re never targeting D7 ROAS, although D7 ROAS is obviously a component of D180 or D365 ROAS. This means their portfolio of ad networks is always being optimized toward their true business goals. Because our platform is fully programmatic, we’ve encoded ad network best practices into the changes we make to ad sets, campaigns, and sub-publishers. We’ve worked closely with the marketing science teams at Facebook and Google to build a robust set of rules that our platform follows to ensure their platforms work optimally, that is, that their algorithms are not constantly in learning mode. That’s a key piece of ensuring performance on Facebook and Google.
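The rules AlgoLift encodes aren’t listed here, but one common shape for such a guardrail is to cap how far a bid or budget may move in a single edit and to enforce a cooldown between edits. The Python sketch below is hypothetical: the 20% step cap and 24-hour cooldown are placeholder values, not Facebook, Google, or AlgoLift thresholds.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ChangeRule:
    max_step_fraction: float = 0.2                 # hypothetical: cap each edit at +/-20%
    min_interval: timedelta = timedelta(hours=24)  # hypothetical: cooldown between edits


def next_budget(current: float, proposed: float, last_change: datetime,
                now: datetime, rule: ChangeRule = ChangeRule()) -> float:
    """Return the budget to push now: the proposal clamped to the allowed step,
    or the current budget if the cooldown since the last edit hasn't elapsed."""
    if now - last_change < rule.min_interval:
        return current
    lower = current * (1 - rule.max_step_fraction)
    upper = current * (1 + rule.max_step_fraction)
    return min(max(proposed, lower), upper)


if __name__ == "__main__":
    last = datetime(2020, 12, 1, 9, 0)
    # The optimizer wants to double the budget; the rule allows a 20% step at most.
    print(next_budget(1000.0, 2000.0, last, last + timedelta(days=2)))
```

Separating “what the optimizer wants” from “what the platform is allowed to receive” keeps the optimization logic independent of each network’s operating rules.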

So our platform empowers UA teams to focus on high-value initiatives, not mundane tasks. Humans have context. They can strategize. They can think creatively. Adjusting bids and budgets on ad platforms is a mundane, laborious task for humans to complete. Our platform allows them to focus on the initiatives that humans are better at than algorithms and machines.

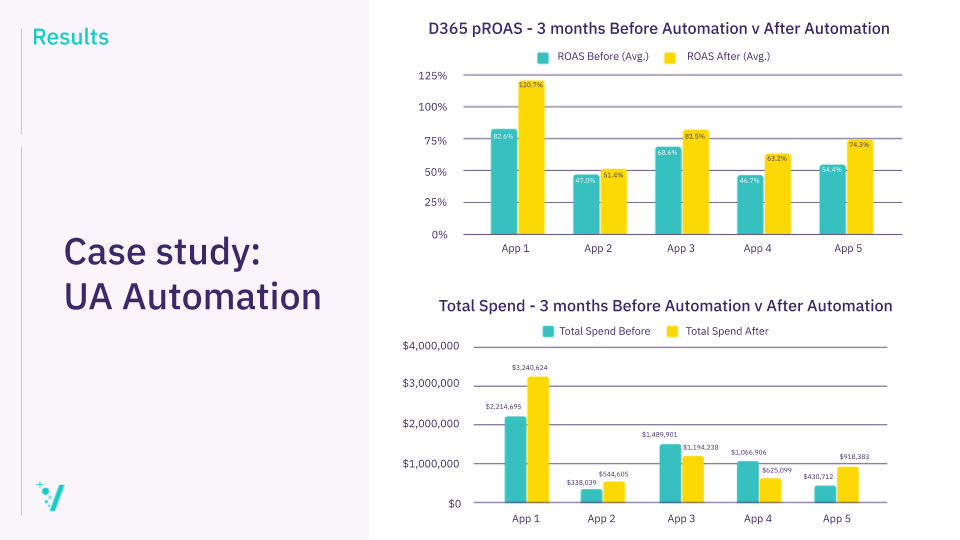

So the results. The chart above shows performance from a developer we engaged with on our UA automation solution, across five different apps. At the top, it shows D365 predicted ROAS for the three months before and after automation, along with total spend. Teal shows performance in the three months before automation, and yellow shows performance in the three months after.

So a few takeaways from this graph. First, all apps saw an increase in D365 predicted ROAS after using AlgoLift’s automation solution, compared to their D365 pROAS before. As you’ll see on the graph, the largest change was on “App 1,” which saw an increase of 40 percentage points. This was while spend increased from $2.2 million in the three months before engaging with AlgoLift to $3.2 million in the three months after. The D365 predicted ROAS of the portfolio went from 68% to 96% across all of the developer’s applications. That’s a 28-percentage-point increase: a pretty significant one, and certainly one that the developer was very happy with.
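The chart mixes percentage-point and relative changes, which are easy to conflate. A quick Python check on the figures quoted above (the helper names are hypothetical) shows that the 28-percentage-point portfolio gain is roughly a 41% relative improvement in D365 pROAS, while spend rose by roughly 45%.

```python
def percentage_point_change(before_pct: float, after_pct: float) -> float:
    """Absolute change between two percentages, in percentage points."""
    return after_pct - before_pct


def relative_change(before: float, after: float) -> float:
    """Proportional change relative to the starting value."""
    if before == 0:
        raise ValueError("relative change is undefined from a zero baseline")
    return (after - before) / before


if __name__ == "__main__":
    # Figures quoted above for the developer's portfolio.
    print(percentage_point_change(68, 96))         # 28 (percentage points)
    print(f"{relative_change(68, 96):.1%}")        # 41.2% relative pROAS improvement
    print(f"{relative_change(2.2e6, 3.2e6):.1%}")  # 45.5% relative spend increase
```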

iOS 14 Measurement

So let’s talk about iOS 14 measurement: how do I measure the performance of my campaigns and channels on iOS 14? [iOS 14] is a hot topic right now, and one that many app developers are thinking about. In a world where we don’t have IDFA, what do I do? Let’s start with the problems. As of now, IDFA looks to be effectively deprecated, because IDFA access is now opt-in. Facebook has announced that it won’t be asking users for permission to access the IDFA, and we can expect other large ad platforms to follow. This means that we can’t deterministically match app installs with campaigns: matching an install’s IDFA with a click or view IDFA is no longer possible where users don’t share their IDFA.


And then post-install tracking is going to be limited by SKAdNetwork’s conversion value. So we’re used to having a rich campaign-level view of the user journey from both a revenue and in-app engagement perspective. This is going to be severely degraded with iOS 14.
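SKAdNetwork’s conversion value is a single 6-bit integer (0 to 63), so any post-install signal has to be packed into those bits. The Python sketch below shows one hypothetical encoding: a 3-bit revenue bucket plus three 1-bit event flags. The thresholds and events are placeholders, not a recommended schema or AlgoLift’s; on device, the app would report the resulting value through SKAdNetwork’s `updateConversionValue(_:)`.

```python
import bisect

# Hypothetical revenue cut points (USD): 7 thresholds -> buckets 0..7 (3 bits).
REVENUE_THRESHOLDS_USD = [0.99, 2.99, 4.99, 9.99, 19.99, 49.99, 99.99]


def encode_conversion_value(revenue_usd: float, completed_tutorial: bool,
                            reached_level_5: bool, made_purchase: bool) -> int:
    """Pack a revenue bucket (low 3 bits) and three hypothetical event flags
    (high 3 bits) into a 6-bit conversion value between 0 and 63."""
    bucket = bisect.bisect_right(REVENUE_THRESHOLDS_USD, revenue_usd)
    flags = (int(completed_tutorial)
             | (int(reached_level_5) << 1)
             | (int(made_purchase) << 2))
    return (flags << 3) | bucket


def decode_conversion_value(value: int):
    """Invert the encoding: (revenue bucket, tutorial, level 5, purchase)."""
    if not 0 <= value <= 63:
        raise ValueError("conversion value must be between 0 and 63")
    bucket = value & 0b111
    flags = value >> 3
    return bucket, bool(flags & 1), bool(flags & 2), bool(flags & 4)
```

Every bit spent on one signal is a bit unavailable for another, which is why post-install visibility is so much coarser than the user-level data advertisers are used to.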

So the solution. So AlgoLift has built a model that requires two data sets. We can get these from either the MMP or directly from the advertiser. The first data set is the SKAdNetwork data. So this is the API supplied by Apple. If you haven’t already started integrating this into your apps, I’d recommend you do this today. Without it, you’ll be unable to track installs where users haven’t shared their IDFA when Apple pushes out their changes early next year.

The second data set that we need is the anonymous in-app user-level data. This is the data set that AlgoLift leverages to predict LTV today: the post-install revenue and engagement events, all reported against a non-PII user ID. So that’s not IDFA; the ID is an advertiser-generated ID for that user. What the model does is give every app install a campaign membership probability between 0% and 100%: for every campaign, and for the organic channel, we predict the probability that the install came from there. The important thing to note about this model is that we’re not trying to recreate attribution as it is today by building a one-to-one mapping between the install and the campaign. We’re trying to work out where the user might have come from. A user’s probabilities across all sources must add up to 100%, so we can account for that user across all the campaigns and the organic channel.
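The sum-to-100% constraint can be sketched as a normalization step. How AlgoLift scores each candidate source isn’t described, so this hypothetical Python helper simply takes non-negative raw scores per campaign and for the organic channel and rescales them into probabilities.

```python
def membership_probabilities(scores: dict) -> dict:
    """Normalize non-negative per-source scores so one install's campaign and
    organic probabilities sum to 1 (100%)."""
    if any(s < 0 for s in scores.values()):
        raise ValueError("scores must be non-negative")
    total = sum(scores.values())
    if total == 0:
        raise ValueError("at least one source needs a positive score")
    return {source: s / total for source, s in scores.items()}


if __name__ == "__main__":
    # Hypothetical raw scores for one install; how they are produced is not described here.
    probs = membership_probabilities({"campaign_1": 1.0, "campaign_2": 3.0, "organic": 6.0})
    print(probs)  # {'campaign_1': 0.1, 'campaign_2': 0.3, 'organic': 0.6}
```

Including “organic” as a source alongside the campaigns is what lets every install be fully accounted for, even when no campaign is a likely origin.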

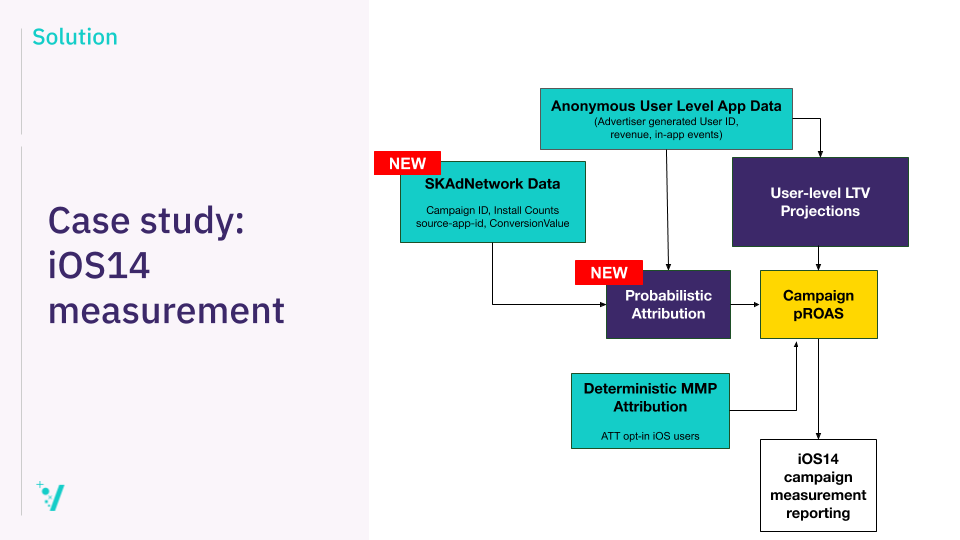

So let’s look at the model. So here you can see that I’m sharing the model (above). There [are] two data inputs to the model. One is the anonymous user-level app data. So that’s the in-app revenue and in-app engagement events all reported at the user level against an advertiser-generated user ID. So that’s the data set that we use to predict the LTV today already. A new data set into this ecosystem is the SKAdNetwork data. So this is data that we get from Apple. So campaign ID, install counts, the source app ID, and the conversion value, most importantly. So really, what we’re trying to do here is find users within this dataset who have this conversion value.

So that’s what this model is trying to do. It’s trying to find users who recently installed the application, and then, leveraging this probabilistic attribution model, it outputs the campaign membership probability for every user who installs the app. We then combine that with the user-level LTV predictions to output a campaign pROAS. It’s important to note that this model continues to ingest deterministic MMP attribution: where we know the attribution of a specific install, we should continue to ingest that data. But the model is not training on this data. This is not a supervised model that is in any way learning from this data set; the model (“Probabilistic Attribution” above) is independent of this data set (“Deterministic MMP attribution” above). And the output of the campaign pROAS model is a daily report of campaign pROAS.

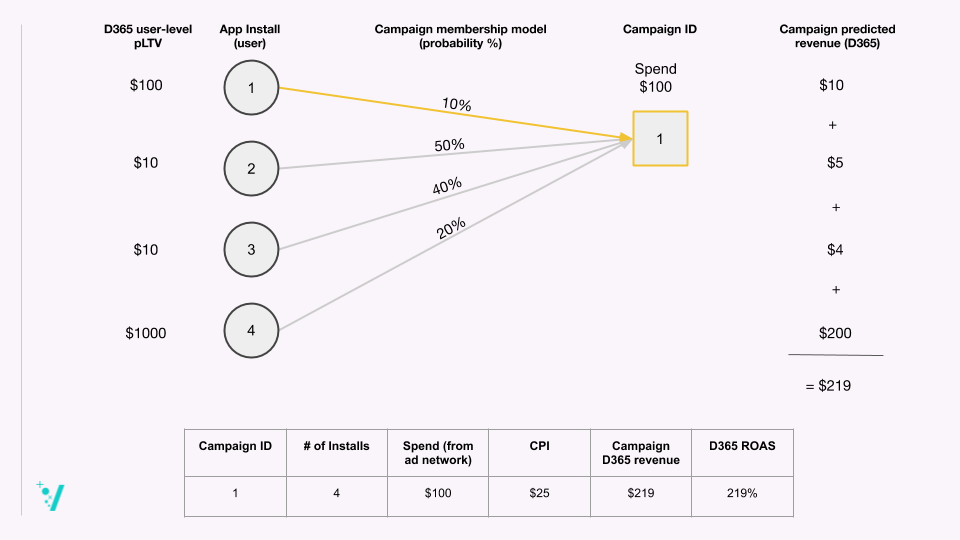

So let’s talk about exactly how the model works, because it’s interesting and I think it’s a smart solution to a really hard problem. Let’s say we have four installs into an app: App Install 1, 2, 3, and 4. AlgoLift makes a user-level LTV prediction every day for those installs; in this example, it’s a D365 user-level predicted LTV. App Install 1 has a prediction of $100 at D365. The AlgoLift probabilistic attribution model then assigns a campaign membership probability to each app install, for each campaign and for the organic source. In this example, I’m only showing one campaign, but you could imagine that there are tons of campaigns, plus the organic source, for which we have to assign a probability.

So App Install 1 has a 10% probability of coming from Campaign 1. Again, what we use to generate these probabilities is the SKAdNetwork data and the anonymous in-app user-level data; those are the two data sets we leverage to output the campaign membership probability. If we then multiply the predicted LTV by the probability, we get a campaign predicted revenue contribution for each app install, for each campaign. You can see that we multiply $100 by 10% and get $10. If we do that for every app install that has a probability of coming from Campaign 1 and sum all those predicted revenue contributions, we get the campaign predicted revenue at D365.

Let’s assume, for this example, that the spend to acquire those four installs was $100. We can then do a little bit of math. Campaign 1 had four installs and $100 of spend to acquire them, so the CPI was $25. We know that the campaign predicted revenue is $219 because we worked that out above, so our D365 predicted ROAS is 219%. That would be a high-performing campaign.

We’ve tested how this model performs versus deterministic attribution. For these tests, we consider deterministic attribution from the MMPs to be the source of truth and compare the probabilistic model against it. The AlgoLift model delivers 92% accuracy in predicting revenue from the campaign. This is a pretty incredible result, given that we’re unable to match an app install to a specific campaign but are still able to predict the future revenue from that campaign. So in a world where we’ve lost the one-to-one mapping of installs to campaigns, this solution can help us optimize campaigns as we do today.
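The worked example translates directly into code: multiply each install’s predicted LTV by its campaign membership probability, sum the contributions, and divide by spend. In this Python sketch, only App Install 1’s figures ($100 pLTV, 10% probability) and the $100 spend come from the talk; installs 2 to 4 are hypothetical placeholders because their values appear only on the slide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Install:
    install_id: str
    pltv_d365: float   # user-level predicted one-year LTV ($)
    p_campaign: float  # probability this install came from the campaign (0-1)


def campaign_proas(installs, spend: float):
    """Return per-install revenue contributions, campaign predicted revenue, and pROAS."""
    contributions = {i.install_id: i.pltv_d365 * i.p_campaign for i in installs}
    predicted_revenue = sum(contributions.values())
    return contributions, predicted_revenue, predicted_revenue / spend


def cpi(spend: float, installs: int) -> float:
    """Cost per install."""
    return spend / installs


if __name__ == "__main__":
    # Install 1 matches the walkthrough ($100 pLTV, 10%); installs 2-4 are hypothetical.
    installs = [
        Install("install_1", 100.0, 0.10),
        Install("install_2", 50.0, 0.80),
        Install("install_3", 200.0, 0.50),
        Install("install_4", 10.0, 0.90),
    ]
    contributions, revenue, proas = campaign_proas(installs, spend=100.0)
    print(contributions)
    print(f"CPI ${cpi(100.0, len(installs)):.0f}, revenue ${revenue:.0f}, D365 pROAS {proas:.0%}")
```

With these placeholder inputs, the campaign’s predicted revenue is $159 and its pROAS 159%; the slide’s $219 of predicted revenue against the same $100 of spend gives the 219% quoted above.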

So that’s it for the problems that we solve for mobile app advertisers. Now a little bit about how you might engage with AlgoLift. We offer a three-month proof-of-concept engagement. The idea is to ensure that clients are comfortable with the service we’re offering, that they’re getting value, and that they enjoy working with us. For the proof of concept, we agree on success criteria, and there are a few different ways to define success. One can be LTV model accuracy: we can output predictions and tell you how accurate those predictions are for your specific application. We can also run an automation proof of concept, where an agreed success criterion may be ROAS improvement. And lastly, we have an iOS 14-ready proof of concept that we’re working on right now.

A lot of clients are really interested in ensuring that they’re ready for iOS 14 and set up for success when Apple pushes out its changes early next year. We have a white-glove onboarding service, and integration takes around two weeks. We’d love to work with you. There are details at the end about how to get in contact if you’re interested in finding out more. But for now, I’ll end by asking a poll question: I’m curious to hear how familiar you are with AlgoLift now, after I’ve shared all that content. It looks like “Very familiar” has won by quite a lot, so it looks like I did an OK job. Now, Matt, I’ll pass it back to you to wrap things up.

Matt:

Great, thank you very much, Paul, for taking us through the details of the AlgoLift platform. I just want to wrap up with a few key takeaways. We’ve learned how AlgoLift approaches predicted LTV, how it measures organic uplift, the benefits of UA automation, and also a new solution for the challenges of iOS 14. Our goal is really to be the trusted guide for growth, and AlgoLift helps us advance this goal. The key benefits we see start with delivering performance insights about where your highest-value customers are coming from. But also, as Paul highlighted, it’s important to understand how that may be changing over time and to have the ability to respond to those changes to truly optimize your acquisition strategy.

And that folds into optimizing your ad spend. So having the right campaign and bid strategy that’s based on these data and insights across your channels, across your geos, across your campaigns, so that you are really investing in and buying the right users to grow your business profitably. And the last thing that I want to highlight is that this solution from AlgoLift is truly cross-channel in that it has the ability to ingest and produce predictions from all of your channels, from all of your spend, and to really deliver on optimization across the entire buy—not just on Vungle—which is a benefit and a value that we’re really excited to bring to you.

If you’ve got a problem that our solution can help with, then please get in touch by filling out the form below.