Feb 5th 2019

5 Questions to Ask When Picking a Mobile Fraud Solution

Back in the first chapter of this three-part blog series, we covered how Vungle currently tackles mobile ad fraud. As mobile fraud becomes more sophisticated, the solutions built to fight it become harder to understand.

While we believe the responsibility lies with us to make sure fraudsters aren’t abusing our SDK, other companies — whether they’re mobile marketers, mobile attribution partners, or third-party fraud analytics solutions — are also looking at ways to fight mobile ad fraud. To help you stay healthy on the Vungle network, here’s a list of questions to ask when either building an internal fraud detection tool or considering an external fraud solution.

➊ At what level is the data being evaluated?

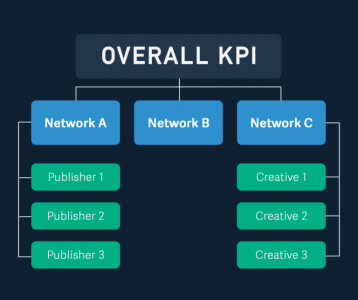

Fraud solutions will aggregate suspicious activity at different levels — network level, publisher level, creative level, install level, device level, and so on. It’s important to understand how different fraud types correlate to the data level you’re looking at.

Examining data at the publisher level is most effective, since black hat publishers are the most typical perpetrators of fraud. However, patterns at the network or creative level sometimes uncover issues that could also be flagged as fraud. Are clicks set up to be fired at the wrong engagement point in the dashboard (network level)? Do the creatives feel like clickbait, and can they be perceived as click spamming (creative level)?
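To make the idea of data levels concrete, here is a minimal Python sketch that rolls the same click and install records up at two different levels. The record layout (`publisher`, `creative`, `clicks`, `installs`), the `aggregate` helper, and the sample numbers are all assumptions made for illustration. They are not part of any Vungle or attribution-provider API.

```python
from collections import defaultdict


def aggregate(records, level):
    """Sum clicks and installs per value of `level` and derive a conversion rate.

    `records` is a list of dicts; `level` is the key to group by,
    for example "publisher" or "creative" (hypothetical field names).
    """
    totals = defaultdict(lambda: {"clicks": 0, "installs": 0})
    for record in records:
        bucket = totals[record[level]]
        bucket["clicks"] += record["clicks"]
        bucket["installs"] += record["installs"]

    summary = {}
    for key, bucket in totals.items():
        clicks = bucket["clicks"]
        cvr = bucket["installs"] / clicks if clicks else 0.0
        summary[key] = {**bucket, "cvr": cvr}
    return summary


# Made-up sample data: two publishers running the same two creatives.
# pub_b sends a flood of clicks on cr_2 that barely convert.
records = [
    {"publisher": "pub_a", "creative": "cr_1", "clicks": 1000, "installs": 10},
    {"publisher": "pub_a", "creative": "cr_2", "clicks": 1000, "installs": 12},
    {"publisher": "pub_b", "creative": "cr_1", "clicks": 1000, "installs": 11},
    {"publisher": "pub_b", "creative": "cr_2", "clicks": 100000, "installs": 15},
]

by_publisher = aggregate(records, "publisher")
by_creative = aggregate(records, "creative")
```

In this made-up data, the creative-level view makes `cr_2` look suspicious even though it also carries normal traffic from `pub_a`. Only the publisher-level view isolates `pub_b` as the source of the click volume.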

Some solutions focus solely on the device and install levels. While these can be effective ways of flagging fraud, you have to grasp the statistical significance of your data within a larger context. For example, when we look at click-to-install time (CTIT), anomalies might show up as installs recorded within the first 20 seconds after a click or more than 24 hours after it. Both can have innocent explanations: a user may already have had the app installed but never opened it until the click (short CTIT), or a user may have downloaded the app from the ad but not opened it until days later (long CTIT). So be wary of this type of analysis, since it’s subject to false positives.
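As a rough illustration of CTIT analysis, the sketch below sorts installs into buckets using the 20-second and 24-hour cutoffs from the example above. The function names are hypothetical, and the sketch assumes the "install" timestamp is the first app open, which is how the CTIT example above treats it. A high share in the "short" or "long" bucket is a prompt for review, not proof of fraud.

```python
from datetime import datetime, timedelta

# Cutoffs taken from the example in the text; real tools may use others.
SHORT_CTIT = timedelta(seconds=20)
LONG_CTIT = timedelta(hours=24)


def ctit_bucket(click_time, install_time):
    """Classify one install by its click-to-install time."""
    ctit = install_time - click_time
    if ctit < SHORT_CTIT:
        return "short"
    if ctit > LONG_CTIT:
        return "long"
    return "normal"


def bucket_shares(events):
    """Return the share of installs per bucket.

    `events` is a list of (click_time, install_time) datetime pairs.
    """
    counts = {"short": 0, "normal": 0, "long": 0}
    for click_time, install_time in events:
        counts[ctit_bucket(click_time, install_time)] += 1
    total = sum(counts.values())
    return {name: (n / total if total else 0.0) for name, n in counts.items()}


# Made-up sample: four installs attributed to the same click time.
click = datetime(2019, 2, 5, 12, 0, 0)
events = [
    (click, click + timedelta(seconds=5)),   # short
    (click, click + timedelta(minutes=3)),   # normal
    (click, click + timedelta(minutes=40)),  # normal
    (click, click + timedelta(days=2)),      # long
]
shares = bucket_shares(events)
```

Looking at shares per publisher rather than at single installs keeps one late opener or one reinstall from being treated as fraud on its own.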

➋ Is the fraud solution preventative?

To effectively reduce fraud in the ecosystem — to actually prevent it — we need to remove the incentive. We need to make sure fraudsters don’t get paid. With this in mind, let’s walk through some common tools available to a developer:

Rejected installs and attributions

What is it? The ability for attribution providers to identify fraudulent installs in real-time. Once identified, providers stop the install notification from being sent to the network.

Is it preventative? Yes. By preventing the fraudulent installs from reaching the network, we prevent the fraudster from getting paid, removing their incentive.

Things to consider: This method is only successful if real-time determination is accurate. If installs are rejected on the basis of arbitrary thresholds such as “CVR>2%” then this tool immediately becomes worthless.

Fraud detection reports

What is it? Any report identifying a group of installs or publishers as fraudulent. As we mentioned above, you should consider the granularity of these reports.

Is it preventative? If accurate, these reports have some potential to be preventative. They can be given to networks for review on a publisher-by-publisher basis so bad actors can be quickly identified and shut down. However, in order for this deterrent to be effective, the process must happen very quickly — otherwise, fraudsters will cash out long before being discovered.

Things to consider: Install-level reporting will be very difficult to act on. While you as the advertiser may get refunded for some installs, fraudsters meanwhile will not be deterred.

Suspicious install reports

What is it? Any tool or report that highlights installs or publishers without making a call on whether they are fraudulent.

Is it preventative? This is perhaps the least useful tool available to advertisers, since it leaves them to make the final decision on flagged activity. Because the report itself makes no commitment to accuracy, devs who act on it can generate false positives. This puts a lot of strain on them and on their relationship with the network as they walk on eggshells between suspected and actual fraud.

Things to consider: If inconclusive reporting is to be preventative, it needs to be actionable. Consider working closely with the network to understand the common suspicious install types you’re seeing. Often there may be duplication with the networks’ own fraud detection tools.

➌ Does the fraud solution use dynamic or static thresholds?

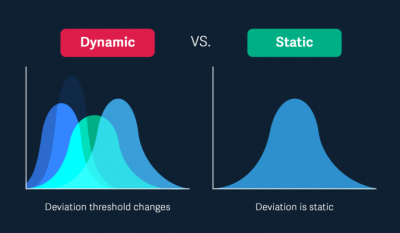

Fraudsters are becoming more and more sophisticated with their methodologies. A static threshold that blocked fraud schemes at one time may become outdated and gamed further down the road. With dynamic thresholds, fraudsters face a moving target that becomes harder to game, helping fraud solutions prevent new, emerging types of fraud.

For example, if we use the CTIT threshold of 90% of installs required within the first hour, fraudsters may hover below the 85% mark to avoid being caught. If the metric is CTR, slithering right under that threshold could be the new tactic. Static thresholds are especially susceptible to gaming, but the more dynamic your threshold is — the more it relies on statistical deviation — the better you can identify anomalies and fraud trends.
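The difference between the two approaches can be sketched in a few lines of Python. The static check below compares each publisher's CTR to a fixed number, while the dynamic check measures how far each publisher sits from the rest of the traffic. The dynamic version uses a modified z-score based on the median and the median absolute deviation, which is one common choice of "statistical deviation" and not necessarily what any particular fraud solution uses. Function names, the 3.5 cutoff, and the sample data are assumptions.

```python
from statistics import median


def static_flags(ctr_by_publisher, threshold):
    """Flag publishers whose CTR exceeds a fixed threshold."""
    return {p for p, ctr in ctr_by_publisher.items() if ctr > threshold}


def dynamic_flags(ctr_by_publisher, max_score=3.5):
    """Flag publishers whose CTR deviates strongly from the current median.

    Uses the modified z-score: 0.6745 * |x - median| / MAD.
    The cutoff moves with the data instead of being fixed in advance.
    """
    values = list(ctr_by_publisher.values())
    center = median(values)
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return set()  # no spread in the data, nothing to compare against
    return {
        p
        for p, v in ctr_by_publisher.items()
        if 0.6745 * abs(v - center) / mad > max_score
    }


# Made-up sample: pub_x sits just under a static 5% CTR threshold.
ctr_by_publisher = {
    "pub_a": 0.010,
    "pub_b": 0.012,
    "pub_c": 0.011,
    "pub_d": 0.009,
    "pub_e": 0.013,
    "pub_x": 0.048,
}

print(static_flags(ctr_by_publisher, threshold=0.05))  # prints set()
print(dynamic_flags(ctr_by_publisher))                 # prints {'pub_x'}
```

In this sample, `pub_x` slips under the fixed 5% line but stands far apart from its peers, so only the deviation-based check catches it.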

➍ What’s the likelihood of false positives and false negatives?

First off, let’s define false positives and false negatives.

False negative: Fraudulent installs or attributions are not caught and are included in your campaign analysis.

False positive: Real installs and correct attributions are falsely described as fraud and are excluded from your campaign analysis.

Any report will inevitably have some false readings. UA managers are commonly more concerned with false negatives. While we’d love to live in a world of zero false negatives, capturing every single fraudulent attribution out there, we risk false positives with overzealous policing.

False positives can be dangerous — especially if you’re preventing attributions and postbacks to networks. This will effectively lower your waterfall positions in networks and stifle any accurate marketing performance analysis. Preemptively blacklisting or shutting down a publisher who is actually a quality source can spell disaster for marketing managers and their business relationships.
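If you have a sample of installs whose true status has been verified, the two error types defined above can be measured directly. The sketch below is a minimal example under that assumption. The `error_rates` helper and the sample counts are hypothetical, and in practice a reliable ground-truth label is the hard part.

```python
def error_rates(installs):
    """Compute false positive and false negative rates.

    `installs` is an iterable of (is_fraud, was_flagged) boolean pairs,
    where `is_fraud` is the verified ground truth.
    """
    true_pos = false_pos = true_neg = false_neg = 0
    for is_fraud, was_flagged in installs:
        if is_fraud and was_flagged:
            true_pos += 1
        elif is_fraud:
            false_neg += 1  # fraud that was not caught
        elif was_flagged:
            false_pos += 1  # real install described as fraud
        else:
            true_neg += 1

    real = false_pos + true_neg
    fraud = true_pos + false_neg
    return {
        "false_positive_rate": false_pos / real if real else 0.0,
        "false_negative_rate": false_neg / fraud if fraud else 0.0,
    }


# Made-up sample: 90 real installs (9 wrongly flagged)
# and 10 fraudulent installs (2 missed).
sample = (
    [(False, True)] * 9 + [(False, False)] * 81
    + [(True, True)] * 8 + [(True, False)] * 2
)
rates = error_rates(sample)
# false_positive_rate is 9/90 = 0.1, false_negative_rate is 2/10 = 0.2
```

Tracking both rates side by side makes the trade-off visible: tightening a rule to push one rate down usually pushes the other up.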

When facing a possible false positive, ask yourself:

- What is the normal/expected KPI? How far is the threshold from the actual?

- Are there instances in which a real install/real attribution could still fall into that fraudulent threshold?
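Both questions can be checked against historical data from sources you already trust. The sketch below assumes you have a list of KPI values (here, conversion rates) from trusted publishers, and reports how far a threshold sits from the normal value and what share of trusted traffic it would have flagged. The `threshold_check` helper and the numbers are hypothetical, and the 2% cutoff reuses the "CVR>2%" example from earlier in this post.

```python
from statistics import mean, stdev


def threshold_check(trusted_values, threshold):
    """Compare a fraud threshold with KPI values from trusted sources.

    Returns (distance, flagged_share):
    - distance: how many standard deviations the threshold sits above
      the trusted mean (None if the values have no spread)
    - flagged_share: fraction of trusted values the threshold would flag
    Needs at least two values.
    """
    mu = mean(trusted_values)
    sigma = stdev(trusted_values)
    distance = (threshold - mu) / sigma if sigma else None
    flagged = sum(1 for v in trusted_values if v > threshold)
    return distance, flagged / len(trusted_values)


# Made-up conversion rates from ten trusted publishers.
trusted_cvr = [0.010, 0.012, 0.015, 0.018, 0.021,
               0.011, 0.014, 0.025, 0.016, 0.013]

distance, flagged_share = threshold_check(trusted_cvr, threshold=0.02)
print(distance, flagged_share)
```

With this sample, the 2% threshold sits less than one standard deviation above the trusted average and would flag two of the ten trusted publishers, which suggests the threshold is too close to normal behavior to act on by itself.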

➎ How transparent is the fraud solution about its methodology?

In order to best communicate issues with your network partners, you must have a strong understanding of the fraud detection methodology. This helps you implement our recommendations and also have transparent conversations with your advertising partners.

Any fraud solution is only as good as your understanding of its functions and how it relates to marketing data. Being able to answer these questions will keep you protected. Only then can you truly trust your numbers and separate the wheat from the chaff.